TOPIC INFO – UGC NET (Psychology)

SUB-TOPIC INFO – Research Methodology and Statistics (UNIT 2)

CONTENT TYPE – Detailed Notes

What’s Inside the Chapter?

1. Correlation

1.1. Mutual Dependence

1.2. Cause and Effect Relationship

1.3. Types of Correlation

1.4. Pearson Product Moment Correlation

1.5. Spearman Rank Order Correlation

1.6. Partial Correlation

1.7. Multiple Correlation

2. Special Correlation Methods

2.1. Point Biserial Correlation (\(r_{PB}\))

2.2. Phi Coefficient (Φ)

2.3. Biserial Correlation

2.4. Tetrachoric Correlation (\(r_{TET}\))

3. Regression

3.1. Simple Linear Regression

3.2. Multiple Regression

4. Factor Analysis

4.1. Introduction

4.2. Types of Factor Analysis

4.3. Assumptions of Factor Analysis

4.4. Methods of Factor Extraction

4.5. Determining the Number of Factors

4.6. Rotation of Factors

4.7. Interpretation of Factor Analysis

4.8. Applications of Factor Analysis in Psychology

4.9. Advantages of Factor Analysis

4.10. Limitations of Factor Analysis

Correlation and Regression Analysis

Correlation

- The relationship between two or more variables is called “correlation”, and the variables are said to be correlated. The relationship between two variables is also known as “covariation”. The term relationship can be used in two different senses, viz., mutual dependence and cause and effect relationship.

Mutual Dependence

- Consider two variables, the rate of oxygen consumption and metabolism in organisms. When oxygen consumption increases, metabolism increases as well. Similarly, when the organism increases its activity (metabolism), it consumes more oxygen.

- On the other hand, when oxygen consumption decreases, the activity, i.e., the metabolism, decreases. When the organism becomes less active, its oxygen consumption also decreases.

- A relationship between two variables in which a change in the value of either variable brings about a change in the value of the other is said to be one of ‘mutual dependence’.

Cause and Effect Relationship

- A relationship between two variables in which changes in the values of one variable are the cause of changes in the values of the other is said to be a ‘cause and effect relationship’. For example, consider environmental temperature and the body temperature of a poikilotherm living in that environment. When the environmental temperature increases, the body temperature increases.

- Here the increase in the environmental temperature is the ‘cause’ and the increase in the body temperature is the ‘effect’. Such a relationship between two variables is known as a ‘cause and effect’ relationship.

- The cause and effect relationship between two variables may be either direct or indirect. The nature of the correlation between two variables need not be the same in every case. For example, the relationship between the height and weight of humans, or the length and weight of organisms, is not identical for all individuals. Generally, weight increases with height or length. However, it is common to see tall people who weigh less and short people who weigh more.

- Another important point concerns “nonsense” or “spurious” correlation between variables. Any two variables that show a statistical correlation without any logical or biological basis are said to have a nonsense or spurious correlation. For instance, you may find a correlation between the number of cellular phones and the number of mosquitoes in an area. Unless we can establish a logical or biological basis for a relationship between these variables, the correlation is spurious.

Types of Correlation

- Correlation between variables may be simple or multiple. A simple correlation deals with only two variables, whereas a multiple correlation deals with more than two variables. We shall be discussing only the simple correlation here. Correlation between two variables may be positive or negative. Whether it is positive or negative, it may be linear or non-linear.

Positive Correlation:

- A correlation between two variables in which the values of one variable increase as the values of the other increase, and decrease as the values of the other decrease, is said to be a positive correlation.

- In other words, in a positive correlation between two variables the values of both the variables move in the same direction. For example, the correlation between the environmental temperature and the body temperature of poikilotherms is a positive correlation.

Negative Correlation:

- A correlation between two variables in which an increase in the values of one variable is accompanied by a decrease in the values of the other, and a decrease in the values of one is accompanied by an increase in the other, is said to be a negative correlation. In other words, the values of the two variables move in opposite directions.

- For example, the correlation between environmental temperature and bacterial growth, which have a cause and effect relationship, is a negative one. With an increase in the temperature the bacterial growth declines, and with a decrease in the temperature the bacterial growth increases.

Linear Correlation:

- When the values of two variables vary in a constant ratio, the correlation between the two variables is said to be linear. The correlation between the optical density and the intensity of the colour of a solution is an example of linear correlation.

- The relationship between two variables can be classified as either linear or curvilinear. The relationship between two variables x and y is said to be linear if the graph of y against x is a straight line; if the graph is a curve, the relationship is curvilinear. Since we shall confine our discussion in this book to linear relations only, we take the liberty of using the term correlation for linear relation.

Pearson Product Moment Correlation

- To measure the magnitude of the linear relation between two variables, a coefficient known as the product moment correlation coefficient is defined. It is an index of the linear relation between two variables and is denoted by r. For convenience, we shall use the term correlation coefficient for the product moment correlation coefficient.

The formula for r is

$$r\;=\;\frac{n\sum xy\;-\;(\sum x)\;(\sum y)}{\sqrt{\left(n\sum x^2\;-\;{(\sum x)}^2\right)\;\left(n\sum y^2\;-\;{(\sum y)}^2\right)}}$$

Limits of Correlation Coefficient:

- The limits of r are −1 and +1, i.e., −1 ≤ r ≤ 1. The value r = 1 indicates a perfect positive linear relation between the two variables x and y. In such a situation, an increase (decrease) in x by any amount is accompanied by a proportional increase (decrease) in y, and vice versa, so all the points lie exactly on a straight line with positive slope. When both variables are expressed as standard (z) scores, this line passes through the origin and makes a 45° angle with the x axis.

- The value r = −1 indicates a perfect negative linear relation between the two variables x and y. Here any increase (decrease) in x is accompanied by a proportional decrease (increase) in y, and vice versa, so all the points lie exactly on a straight line with negative slope. In standard-score units this line makes an angle of 45° with both axes.

- r = 0 represents the absence of a linear relation between x and y. Here an increase or decrease in x is not accompanied by any systematic linear change in y, and vice versa. The regression line of y on x is then parallel to the x axis, and the regression line of x on y is parallel to the y axis.
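The three limiting values can be checked numerically. The sketch below is only an illustration: the function name `pearson_r` and the small data sets are made up for this purpose, and the function simply applies the raw-sum formula given above.

```python
import math

def pearson_r(x, y):
    """Product moment correlation from raw sums (formula given in the text)."""
    n = len(x)
    sum_x, sum_y = sum(x), sum(y)
    sum_x2 = sum(v * v for v in x)
    sum_y2 = sum(v * v for v in y)
    sum_xy = sum(a * b for a, b in zip(x, y))
    numerator = n * sum_xy - sum_x * sum_y
    denominator = math.sqrt((n * sum_x2 - sum_x ** 2) * (n * sum_y2 - sum_y ** 2))
    return numerator / denominator

x = [1, 2, 3, 4, 5]
y_up = [2 * v + 3 for v in x]       # exact increasing line (slope 2)
y_down = [10 - 0.5 * v for v in x]  # exact decreasing line (slope -0.5)
y_flat = [2, 1, 4, 1, 2]            # hypothetical values with no linear trend

r_up = pearson_r(x, y_up)
r_down = pearson_r(x, y_down)
r_flat = pearson_r(x, y_flat)
print(r_up, r_down, r_flat)
```

With these particular values the three coefficients come out as 1, −1 and 0. Note that the slopes of the two exact lines are 2 and −0.5, so r = ±1 reflects how perfectly the points fall on a line, not how steep that line is in raw-score units.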

Example:

Compute the product moment correlation coefficient between the variables.

| X | X² | Y | Y² | XY |

|---|---|---|---|---|

| 27 | 729 | 20 | 400 | 540 |

| 23 | 529 | 24 | 576 | 552 |

| 20 | 400 | 19 | 361 | 380 |

| 25 | 625 | 18 | 324 | 450 |

| 26 | 676 | 23 | 529 | 598 |

| 19 | 361 | 20 | 400 | 380 |

| 21 | 441 | 19 | 361 | 399 |

| 20 | 400 | 21 | 441 | 420 |

| 27 | 729 | 22 | 484 | 594 |

| 22 | 484 | 18 | 324 | 396 |

Σx = 230 Σx² = 5374 Σy = 204 Σy² = 4200 Σxy = 4709

Solution:

Here n = 10.

$$r\;=\;\frac{n\sum xy\;-\;(\sum x)\;(\sum y)}{\sqrt{\left(n\sum x^2\;-\;{(\sum x)}^2\right)\;\left(n\sum y^2\;-\;{(\sum y)}^2\right)}}$$

$$=\;\frac{10\;\times\;4709\;-\;230\;\times\;204}{\sqrt{\left(10\;\times\;5374\;-\;{(230)}^2\right)\;\left(10\;\times\;4200\;-\;{(204)}^2\right)}}\\\\=\;\frac{170}{\sqrt{840\;\times\;384}}\\\\=\;0.2993$$
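The arithmetic of this example can be reproduced with a few lines of Python. This is a minimal sketch that follows the raw-sum formula step by step; the variable names (`term_x`, `term_y` and so on) are chosen here for illustration only.

```python
import math

# Data from the worked example above.
x = [27, 23, 20, 25, 26, 19, 21, 20, 27, 22]
y = [20, 24, 19, 18, 23, 20, 19, 21, 22, 18]

n = len(x)
sum_x, sum_y = sum(x), sum(y)
sum_x2 = sum(v * v for v in x)
sum_y2 = sum(v * v for v in y)
sum_xy = sum(a * b for a, b in zip(x, y))

numerator = n * sum_xy - sum_x * sum_y   # 47090 - 46920
term_x = n * sum_x2 - sum_x ** 2         # 53740 - 52900
term_y = n * sum_y2 - sum_y ** 2         # 42000 - 41616
r = numerator / math.sqrt(term_x * term_y)

print(sum_x, sum_x2, sum_y, sum_y2, sum_xy)  # 230 5374 204 4200 4709
print(numerator, term_x, term_y)             # 170 840 384
print(round(r, 4))                           # 0.2993
```

Printing the column sums first makes it easy to compare each intermediate value with the table before looking at the final coefficient.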

Spearman Rank Order Correlation

Pearson’s correlation is calculated on continuous variables. It is not advised under two circumstances: first, when the data are in the form of ranks, and second, when the data do not satisfy the assumptions of Pearson’s correlation. Under such circumstances the application of Pearson’s correlation is doubtful, and rank-order correlations are one of the important alternatives. Ordinal-scale data are called rank-order data.

Rank correlation is used to find the correlation between two variables whose scores are in rank order. It is represented by the Greek letter ρ (rho). The formula for ρ is ρ = \(1\;-\;\frac{6\sum D^2}{N\;(N^2\;-\;1)}\),

where, D: Difference between the Ranks

N: Number of Paired Scores

Rank correlation ρ is a non-parametric statistic. Ranks reflect only the position of subjects or items in the series; no weight is given to the gaps or differences between adjacent scores. For example, individuals with scores of 58, 56, 34 and 16 on a test would be ranked 1, 2, 3 and 4, although the differences between the first and second, second and third, and third and fourth scores are 2, 22 and 18 respectively.

Rank-Order Data:

- When the data are in rank-order format, the correlation that can be computed is called a rank-order correlation. Rank-order data present the ranks of the individuals or subjects. The observations are either already in rank order or ranks are assigned to them.

- Marks obtained in a unit test constitute continuous data. But if only the merit list of the students is displayed, the data are rank-order data. If the data are in terms of ranks, Pearson’s correlation need not be computed; Spearman’s rho is a good option.

Assumptions Underlying Pearson’s Correlation not Satisfied:

- Statistical significance testing of Pearson’s correlation requires some assumptions about the distributional properties of the variables. We have already delineated these assumptions in the earlier unit. When the data do not meet these assumptions, employing Pearson’s correlation is problematic. It should be noted that small violations of the assumptions do not materially affect the distributional properties and the associated probability judgments; hence Pearson’s correlation is called a robust statistic. However, when the assumptions are seriously violated, Pearson’s correlation should no longer be considered. Under such circumstances, rank-order correlations should be preferred over Pearson’s correlation.

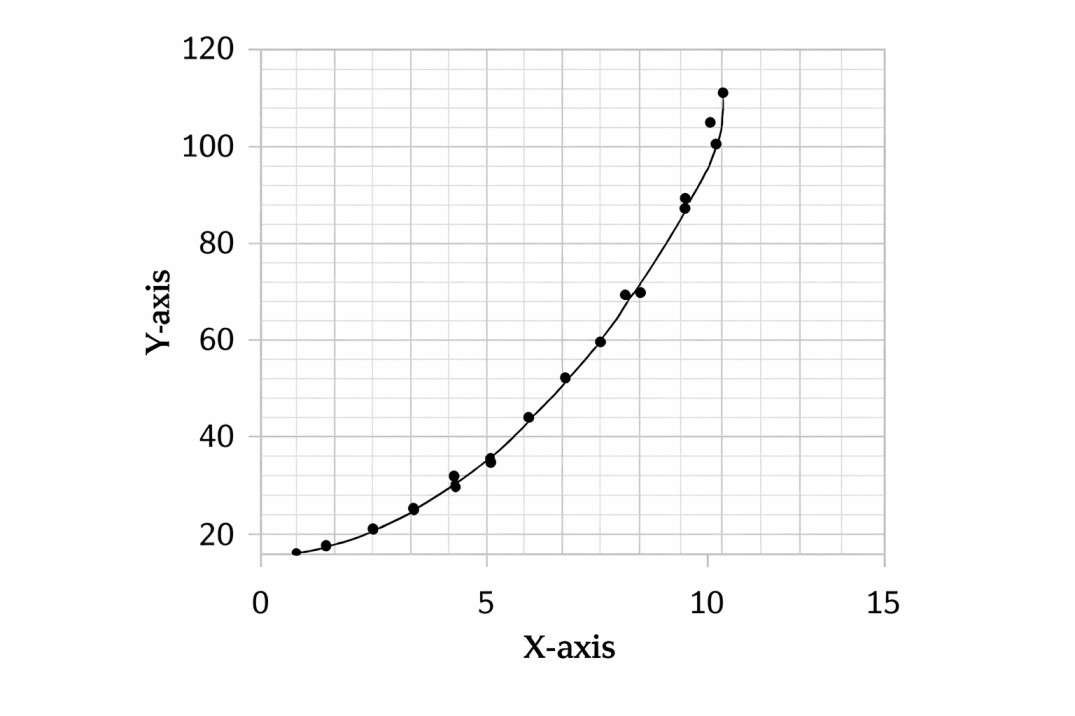

- Rank-order correlations are also applicable when the relationship between two variables is not linear but is still monotonic. A monotonic relationship is one in which the values consistently increase and never decrease, or consistently decrease and never increase. Hence, a monotonic relationship implies that as X increases, Y consistently increases, or as X increases, Y consistently decreases. In such cases, a rank-order correlation is a better option than Pearson’s correlation coefficient.

- However, some caution should be observed while doing so. A careful scrutiny of the figure indicates that, in reality, it is a curvilinear relationship (a power function). So the relationship between X and Y is not linear but curvilinear. Hence, curve-fitting is a better approach for such data than rank-order correlation. Rank-order correlation can still be used with these data, since the curvilinear relationship shown is also monotonic. It must be kept in mind that not all curvilinear relationships are monotonic.
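The difference between monotonic and non-monotonic curves can be illustrated with a small sketch. The data here are invented: a cubic power function stands in for the monotonic curve in the figure, and a U-shaped curve stands in for a curvilinear relationship that is not monotonic. The helper names are hypothetical, and the ranking helper assumes there are no tied values.

```python
import math

def pearson_r(x, y):
    """Pearson r computed from deviations about the means."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def ranks_no_ties(values):
    """Rank 1 = smallest value; valid only when all values are distinct."""
    order = sorted(values)
    return [order.index(v) + 1 for v in values]

def spearman_rho(x, y):
    """Spearman's rho from the formula 1 - 6*sum(D^2) / (N(N^2 - 1))."""
    rx, ry = ranks_no_ties(x), ranks_no_ties(y)
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))

x = list(range(1, 11))
y_power = [v ** 3 for v in x]        # curvilinear and monotonic
y_u = [(v - 5.2) ** 2 for v in x]    # curvilinear but NOT monotonic

r_power = pearson_r(x, y_power)
rho_power = spearman_rho(x, y_power)
rho_u = spearman_rho(x, y_u)
print(round(r_power, 3), rho_power, round(rho_u, 3))
```

For the power function, rho equals 1 because the ordering of Y matches the ordering of X exactly, while Pearson’s r stays below 1 (about 0.93) because the points do not lie on a straight line. For the U-shaped data, rho is small (about 0.15), which shows that a rank-order correlation does not rescue a relationship that is not monotonic.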

Use of Rank Correlation:

- Often we come across situations where sport performance cannot be measured objectively because no objective criterion exists; in such situations the athlete is evaluated by means of grading. For example, dribbling performance in basketball or passing accuracy in soccer can be evaluated only through ranks.

- In such situations, the correlation between the variables can be measured only through rank correlation. If the scores on one variable are ranks and those on the other are actual measurements, the rank correlation is obtained by converting the actual measurements into ranks. Further, if both variables are in score form, ρ can be obtained by converting the scores into their corresponding ranks.

Example:

Consider an experiment in which judge’s marks were obtained. Calculate rank correlation between the two parameters.

| X | 15 | 13 | 12 | 14 | 16 | 11 | 10 | 12 |

|---|---|---|---|---|---|---|---|---|

| Y | 16 | 10 | 8 | 12 | 10 | 14 | 13 | 10 |

Solution: Computation of rank correlation between the two parameters is shown in the following table.

| X (Scores) | Y (Scores) | Rₓ | Rᵧ | D = Rₓ − Rᵧ | D² |

|---|---|---|---|---|---|

| 15 | 16 | 2 | 1 | 1 | 1 |

| 13 | 10 | 4 | 6 | -2 | 4 |

| 12 | 8 | 5.5 | 8 | -2.5 | 6.25 |

| 14 | 12 | 3 | 4 | -1 | 1 |

| 16 | 10 | 1 | 6 | -5 | 25 |

| 11 | 14 | 7 | 2 | 5 | 25 |

| 10 | 13 | 8 | 3 | 5 | 25 |

| 12 | 10 | 5.5 | 6 | -0.5 | 0.25 |

ΣD² = 87.50

$$\rho\;=\;1\;-\;\frac{6\sum D^2}{N\;(N^2\;-\;1)}\\\\\rho\;=\;1\;-\;\frac{6\;\times\;87.5}{8\;(8^2\;-\;1)}\\\\=\;1\;-\;1.04\\=\;-0.04$$

Remarks:

- In the above illustration a tie occurs at the X score of 12, so both scores were given the average of the ranks they occupy (5 and 6), i.e., a rank of 5.5 each. In the Y scores, three scores of 10 are tied; the ranks they occupy (5, 6 and 7) were averaged, and this average rank of 6 was assigned to each.
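The averaging of tied ranks described in the remark can be sketched in Python as follows. The function name `average_ranks` is hypothetical; it ranks the highest score as 1, as in the table above, and gives tied scores the mean of the ranks they occupy.

```python
def average_ranks(scores):
    """Rank 1 = highest score; tied scores share the mean of their ranks."""
    ordered = sorted(scores, reverse=True)
    ranks = []
    for s in scores:
        first = ordered.index(s) + 1            # first rank position occupied by s
        count = ordered.count(s)                # number of scores tied at s
        ranks.append(first + (count - 1) / 2)   # mean of first .. first + count - 1
    return ranks

# Data from the example above.
x = [15, 13, 12, 14, 16, 11, 10, 12]
y = [16, 10, 8, 12, 10, 14, 13, 10]

rx = average_ranks(x)   # [2.0, 4.0, 5.5, 3.0, 1.0, 7.0, 8.0, 5.5]
ry = average_ranks(y)   # [1.0, 6.0, 8.0, 4.0, 6.0, 2.0, 3.0, 6.0]

n = len(x)
sum_d2 = sum((a - b) ** 2 for a, b in zip(rx, ry))
rho = 1 - 6 * sum_d2 / (n * (n ** 2 - 1))
print(sum_d2, round(rho, 2))   # 87.5 -0.04
```

The ranks, ΣD² and ρ agree with the values in the computation table.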

Example:

Compute the rank order correlation.

| X | 3.4 | 4.1 | 4.2 | 3.5 | 4.3 | 3.3 | 2.1 | 4.8 | 3.4 | 2.5 |

|---|---|---|---|---|---|---|---|---|---|---|

| Y | 175 | 180 | 170 | 164 | 166 | 172 | 150 | 163 | 165 | 145 |

| X | Y | Rₓ | Rᵧ | D = Rₓ − Rᵧ | D² |

|---|---|---|---|---|---|

| 3.4 | 175 | 6.5 | 2 | 4.5 | 20.25 |

| 4.1 | 180 | 4 | 1 | 3 | 9 |

| 4.2 | 170 | 3 | 4 | -1 | 1 |

| 3.5 | 164 | 5 | 7 | -2 | 4 |

| 4.3 | 166 | 2 | 5 | -3 | 9 |

| 3.3 | 172 | 8 | 3 | 5 | 25 |

| 2.1 | 150 | 10 | 9 | 1 | 1 |

| 4.8 | 163 | 1 | 8 | -7 | 49 |

| 3.4 | 165 | 6.5 | 6 | 0.5 | 0.25 |

| 2.5 | 145 | 9 | 10 | -1 | 1 |

ΣD² = 119.50

$$\rho\;=\;1\;-\;\frac{6\sum D^2}{N\;(N^2\;-\;1)}\\\\\rho\;=\;1\;-\;\frac{6\;\times\;119.5}{10\;(10^2\;-\;1)}\\\\=\;1\;-\;0.72\\=\;0.28$$

Null and Alternative Hypothesis:

Spearman’s rho can be computed as a descriptive statistic. We do not carry out statistical hypothesis testing for the descriptive use of rho. If \(r_s\) is computed as a statistic to estimate the population correlation (a parameter), then null and alternative hypotheses are required. In what follows, \(\rho_s\) denotes the population value of Spearman’s rho.

The null hypothesis states that

\(H_0:\;\rho_s\;=\;0\)

It means that the value of Spearman’s correlation coefficient between X and Y is zero in the population represented by the sample.

The alternative hypothesis states that

\(H_A:\;\rho_s\;\neq\;0\)

It means that the value of Spearman’s rho between X and Y is not zero in the population represented by the sample. This alternative hypothesis requires a two-tailed test.

Depending on the theory, other alternatives could also be written. They are either

\(H_A:\;\rho_s\;<\;0\\\\or\\\\H_A:\;\rho_s\;>\;0\)

The first alternative hypothesis, \(H_A\), states that the population value of Spearman’s rho is smaller than zero. The second \(H_A\) states that the population value of Spearman’s rho is greater than zero. Remember, only one of them has to be tested, not both.

You can recall from the earlier discussion that a one-tailed test is required for such a directional hypothesis.
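The notes do not describe a test procedure at this point. One way to see what two-tailed and one-tailed tests mean for rho is an exact permutation test, sketched below as an illustration rather than as the method intended by these notes. It reuses the eight-pair data from the earlier tied-ranks example; all function and variable names are hypothetical.

```python
from itertools import permutations

def average_ranks(scores):
    """Rank 1 = highest score; tied scores share the mean of their ranks."""
    ordered = sorted(scores, reverse=True)
    return [ordered.index(s) + 1 + (ordered.count(s) - 1) / 2 for s in scores]

def rho_from_ranks(rx, ry):
    """Formula 1 - 6*sum(D^2) / (n(n^2 - 1)) applied to two lists of ranks."""
    n = len(rx)
    d2 = sum((a - b) ** 2 for a, b in zip(rx, ry))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))

# Eight-pair example from earlier in this section.
x = [15, 13, 12, 14, 16, 11, 10, 12]
y = [16, 10, 8, 12, 10, 14, 13, 10]
rx, ry = average_ranks(x), average_ranks(y)
observed = rho_from_ranks(rx, ry)

# Under H0 every pairing of the Y ranks with the X ranks is equally likely,
# so all 8! = 40320 orderings of the Y ranks are enumerated.
perm_rhos = [rho_from_ranks(rx, p) for p in permutations(ry)]
total = len(perm_rhos)
eps = 1e-12  # tolerance for floating-point comparisons

# Two-tailed: H_A is rho_s != 0, so extreme values in either direction count.
p_two_tailed = sum(abs(r) >= abs(observed) - eps for r in perm_rhos) / total
# One-tailed: only one of these is used, depending on the stated H_A.
p_lower = sum(r <= observed + eps for r in perm_rhos) / total   # H_A: rho_s < 0
p_upper = sum(r >= observed - eps for r in perm_rhos) / total   # H_A: rho_s > 0

print(round(observed, 2), total)
print(p_two_tailed, p_lower, p_upper)
```

Each p-value is the proportion of rearrangements giving a rho at least as extreme as the observed one in the direction(s) named by the alternative hypothesis. Since the observed rho here is close to zero, the two-tailed p-value is large and the null hypothesis would not be rejected.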

Numerical Example for Untied and Tied Ranks

Data on both the X and Y variables are required to compute Spearman’s rho. If the data are on continuous variables, they need to be converted into rank orders. The computational formula of Spearman’s rho (\(r_s\)) is as follows:

$$r_s\;=\;1\;-\;\frac{6\sum\;D^2}{n(n^2\;-\;1)}$$

where,

\(r_s\) = Spearman’s rank-order correlation

D = difference between the pair of ranks of X and Y

n = the number of pairs of ranks

Steps:

Let’s solve an example. Suppose students appear for an entrance examination after their undergraduate studies, and we are interested in correlating undergraduate marks with performance in the entrance test. We have data on 10 individuals, but only their ranks in the undergraduate examination and the merit list of the entrance test. We want to find the correlation between rank in the undergraduate examination and rank in the entrance test. The data are provided in the table below. Since these are rank-order data, we can compute Spearman’s rho. (If the data on one or both variables were continuous, we would need to convert them into ranks before computing Spearman’s rho.)

Table: Data for Spearman’s rho

| Students | Rank in Undergraduate Examination (X) | Rank in entrance test (Y) |

|---|---|---|

| A | 1 | 4 |

| B | 5 | 6 |

| C | 3 | 2 |

| D | 6 | 7 |

| E | 9 | 10 |

| F | 2 | 1 |

| G | 4 | 3 |

| H | 10 | 9 |

| I | 8 | 8 |

| J | 7 | 5 |

The steps for computation of \(r_s\) are given below:

Step 1: List the names/serial number of subjects (students, in this case) in column 1.

Step 2: Write the scores of each subject on X variable (undergraduate examination) in the column labeled as X (column 2), and write the scores of each subject on Y variable (Entrance test) in the column labeled as Y (column 3). We will skip this step because we do not have original scores in undergraduate examination and entrance test.

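Step 3: Rank the scores of X variable in ascending order. Give rank 1 to the lowest score, 2 to the next lowest score, and so on. This column is labeled as \(R_X\) (Column 4). Ranking from the highest score downward, as in the earlier examples, gives the same rho, provided the same direction is used for both variables. In case of our data, the scores are already ranked.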

Step 4: Rank the scores of Y variable in ascending order. Give rank 1 to the lowest score, 2 to the next lowest score, and so on. This column is labeled as \(R_Y\) (Column 5). Do cross-check your ranking by calculating the sum of ranks. In case of our data, the scores are already ranked.

Step 5: Now find out D = \(R_X\) – \(R_Y\) (Column 6).

Step 6: Square each value of D and enter it in the next column labeled as D² (Column 7). Obtain the sum of the D² values and write it at the end of the D² column. For this example, ΣD² = 20.

Step 7: Use the equation (given below) to compute the correlation between rank in undergraduate examination and rank in entrance test.

$$r_s\;=\;1\;-\;\frac{6\sum\;D^2}{n(n^2\;-\;1)}$$
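The steps above can be sketched in Python for the ten students. This is an illustrative sketch with hypothetical variable names; the ranks are typed in directly because the data are already ranked, and student J’s entrance rank (5) is the value used in the computation table for this example.

```python
# Step 1: subjects; Steps 2-4: ranks (already available for these data).
students = list("ABCDEFGHIJ")
rank_x = [1, 5, 3, 6, 9, 2, 4, 10, 8, 7]   # rank in undergraduate examination
rank_y = [4, 6, 2, 7, 10, 1, 3, 9, 8, 5]   # rank in entrance test

# Cross-check suggested in Step 4: untied ranks 1..n must sum to n(n + 1)/2.
n = len(students)
assert sum(rank_x) == sum(rank_y) == n * (n + 1) // 2

# Step 5: D = R_X - R_Y.
d = [a - b for a, b in zip(rank_x, rank_y)]

# Step 6: D squared and its sum.
d_squared = [v ** 2 for v in d]
sum_d2 = sum(d_squared)

# Step 7: Spearman's rho from the computational formula.
r_s = 1 - 6 * sum_d2 / (n * (n ** 2 - 1))

for row in zip(students, rank_x, rank_y, d, d_squared):
    print(*row)
print("sum of D^2 =", sum_d2)    # 20
print("r_s =", round(r_s, 3))    # 0.879
```

With ΣD² = 20 and n = 10, the formula gives 1 − 120/990, which is approximately 0.879.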

Table: Ranks obtained in the undergraduate examination and in the entrance examination, with the computation of Spearman’s rho.

| Students | Rank in Undergraduate Examination (X) | Rank in entrance test (Y) | Rₓ | Rᵧ | D = Rₓ − Rᵧ | D² |

|---|---|---|---|---|---|---|

| A | 1 | 4 | 1 | 4 | -3 | 9 |

| B | 5 | 6 | 5 | 6 | -1 | 1 |

| C | 3 | 2 | 3 | 2 | 1 | 1 |

| D | 6 | 7 | 6 | 7 | -1 | 1 |

| E | 9 | 10 | 9 | 10 | -1 | 1 |

| F | 2 | 1 | 2 | 1 | 1 | 1 |

| G | 4 | 3 | 4 | 3 | 1 | 1 |

| H | 10 | 9 | 10 | 9 | 1 | 1 |

| I | 8 | 8 | 8 | 8 | 0 | 0 |

| J | 7 | 5 | 7 | 5 | 2 | 4 |

n = 10

ΣD² = 20

$$r_s = 1 - \frac{6 \sum D^2}{n(n^2 - 1)} = 1 - \frac{6 \times 20}{10(10^2 - 1)} = 1 - \frac{120}{990} = 1 - 0.1212 = 0.879$$

Spearman’s rho has now been computed for this example. The value of rho is 0.879. This value is positive, which shows that the correlation between the ranks in the undergraduate examination and the ranks in the entrance test is positive. It indicates that the relationship between them is positively monotonic. The value of the correlation coefficient is close to 1.00, which indicates that the strength of association between the two sets of ranks is high. Tied ranks did not occur in this example, since it was the first example. Now I shall introduce you to the problem of tied ranks.

An interesting point should be noted about the relationship between Pearson’s correlation and Spearman’s rho. Pearson’s correlation computed on the ranks of X and Y (i.e., \(R_X\) and \(R_Y\)) is equal to Spearman’s rho on X and Y. When there are no tied ranks, the computational formula above gives exactly this value. Spearman’s rho can therefore be considered a special case of Pearson’s r.
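This relationship can be verified numerically. The sketch below, with hypothetical function names, applies Pearson’s raw-sum formula to the ranks and compares the result with the 6ΣD² formula, first for the untied ranks of the entrance-examination example and then for the averaged ranks of the earlier eight-pair example that contained ties.

```python
import math

def pearson_r(x, y):
    """Pearson r from raw sums (the product moment formula)."""
    n = len(x)
    num = n * sum(a * b for a, b in zip(x, y)) - sum(x) * sum(y)
    den = math.sqrt((n * sum(a * a for a in x) - sum(x) ** 2)
                    * (n * sum(b * b for b in y) - sum(y) ** 2))
    return num / den

def shortcut_rho(rx, ry):
    """Spearman computational formula: 1 - 6*sum(D^2) / (n(n^2 - 1))."""
    n = len(rx)
    return 1 - 6 * sum((a - b) ** 2 for a, b in zip(rx, ry)) / (n * (n ** 2 - 1))

# Untied ranks from the entrance-examination example.
rx = [1, 5, 3, 6, 9, 2, 4, 10, 8, 7]
ry = [4, 6, 2, 7, 10, 1, 3, 9, 8, 5]
untied_pearson = pearson_r(rx, ry)
untied_shortcut = shortcut_rho(rx, ry)

# Averaged ranks from the eight-pair example with tied scores.
tx = [2, 4, 5.5, 3, 1, 7, 8, 5.5]
ty = [1, 6, 8, 4, 6, 2, 3, 6]
tied_pearson = pearson_r(tx, ty)
tied_shortcut = shortcut_rho(tx, ty)

print(round(untied_pearson, 4), round(untied_shortcut, 4))
print(round(tied_pearson, 4), round(tied_shortcut, 4))
```

For the untied ranks the two values coincide (about 0.879). For the tied ranks they differ (about −0.074 for Pearson on the ranks against −0.042 from the computational formula), which is one reason tied ranks need separate attention.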